Grok’s deepfake consent failures (covered in my last piece) are the freshest example of what happens when we skip the safety question entirely as we integrate AI further and further into our daily lives. But “ban it all” isn’t the answer either. Full censorship kills the very thing that makes these models groundbreaking. Total hands-off invites disaster at internet speed.

There’s a middle path nobody seems to be pursuing. It’s not complicated. Keep one version wild and unfettered for discovery. Require a separate, hardened version for anything that can hurt real people.

Both can exist. They should.

The Philosophical Why: AI Has Two Souls – Let Both Live

One soul is the explorer: curious, boundary-pushing, maximally unfiltered. This version spots patterns nobody else sees, generates wild art, tests crazy hypotheses, red-teams itself, and helps us figure out what these systems are truly capable of. We need this soul to stay alive. Clamp down too hard, and we lose breakthroughs, honest feedback loops, and the raw creativity that could solve real problems. That’s why people (me included) want models to remain as unfettered as possible in controlled spaces. Stifle it, and AI turns into another bland, corporate-approved chatbot that lags behind whatever secretive lab or foreign competitor builds next.

The other soul is the one we let near actual humans in high-stakes situations: job applications, loan decisions, welfare eligibility, parole recommendations, medical prioritization, government databases, content moderation at scale. Here, “maybe I’ll respect ‘no’ this time” isn’t quirky; it’s downright reckless. When the output can humiliate, discriminate, scam, exclude, or enable harm, we need defaults that treat “don’t do this,” “no consent,” and “this person will be devastated” as brick walls, not polite suggestions.

Some models already handle this better on explicit consent issues. They flat-out refuse, explain why, and don’t let clever rephrasing sneak through. Why? Because in consequential contexts, reliability beats raw power every time. We give up a little wildness to avoid hurting vulnerable people. But that same tight shackling limits not just the very bad, but also the potential for the very good.

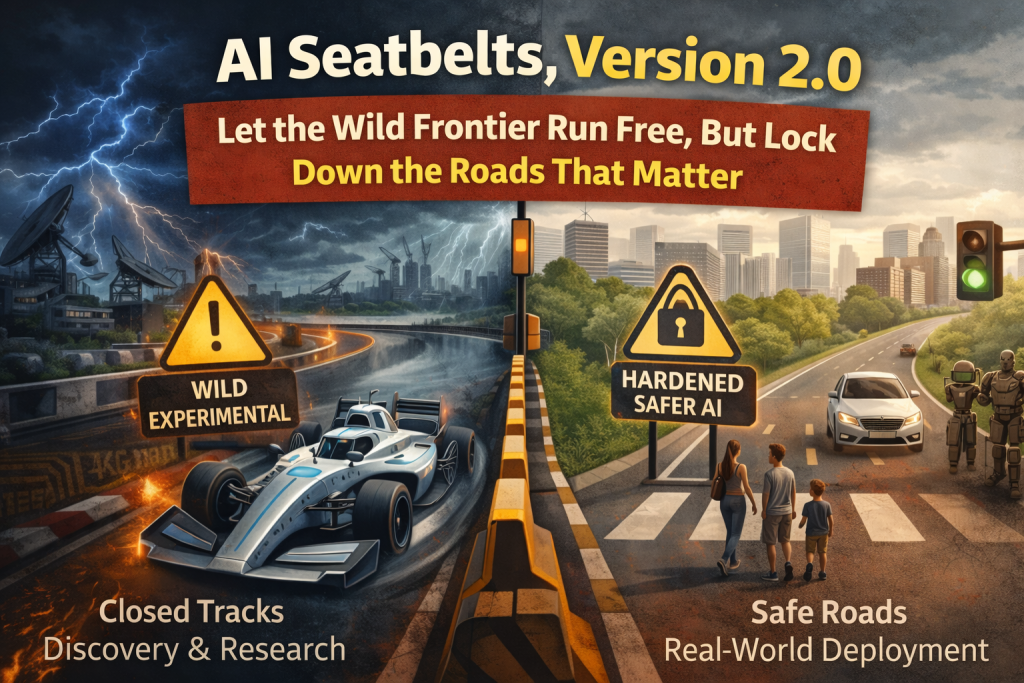

The philosophy is dead simple: Freedom for discovery, safety for deployment.

We don’t let experimental race cars rip through school zones; we test them on closed tracks. We don’t ban cars because early ones killed people; we add seatbelts, airbags, and traffic laws. Same here. Let the frontier run wild in sandboxes – research labs, art projects, personal tinkering, red-teaming. But before anything gets plugged into hospitals, courts, welfare offices, hiring pipelines, or defense systems, require a hardened version, one that actually listens to “no,” and put it through the same kind of rigorous testing that other safety-critical industries already face.

This allows for maximum progress and pro-human sustainability in parallel. The explorer feeds ideas into safer systems over time, without the collateral damage. We’ve done versions of this with nukes (research vs. strict weapon controls), biotech (CRISPR labs vs. heavy human-editing regs), even social media (wild-west posting vs. eventual content rules after scandals). AI’s difference is speed. Harms scale instantly and globally. We can’t afford decades of “oops” impacting the entire planet before we wise up to the mistakes that are already being made.

History says the split works. The question is whether we’ll actually build it deliberately this time, or wait for the wreckage to force it on us.

The Practical How: Realistic Steps to Make the Split Happen

No sci-fi required, so don’t pick up the Skynet manual just yet. These are straightforward moves that could stick without reinventing the wheel.

1. Offer two clear flavors from the start

Companies provide:

- Explorer/Research mode: Minimal refusals, full raw capability. Available to researchers, artists, and premium users who opt in. It comes with warnings, usage logs, rate limits, monitoring for abuse patterns, and strict rules such as no distributing harmful outputs. This is the “unfettered” zone I’m advocating be preserved.

- Production/Safe mode: A separate version (or heavy safety layer) where consent, harm, discrimination, and scam refusals are hard-coded and near-absolute. It refuses non-consensual sexual edits, hate targeting, and similar requests by default, and clever jailbreaks don’t get around that. Mandatory for any enterprise or government deal. And this isn’t hypothetical territory. Corporations are already deploying AI wellness coaches, hiring tools, and benefits systems. Production mode already exists. The question is whether it’s actually built to the standard it needs to be.
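
For readers who think in code, here is a minimal sketch of how that routing might look. Everything in it is an assumption for illustration: the `Mode` names, the category labels, the `high_stakes` and `opted_in` flags, and the return strings are all hypothetical, and a real system would need a classifier to assign categories in the first place. The one design choice it tries to capture is that high-stakes use always lands in production mode, no matter what the user opted into.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    EXPLORER = "explorer"
    PRODUCTION = "production"


# Hypothetical category labels, loosely taken from the list above.
HARD_REFUSALS = frozenset(
    {"non_consensual_sexual_edit", "hate_targeting", "scam", "discrimination"}
)


@dataclass(frozen=True)
class Request:
    category: str      # assumed to be assigned upstream by some classifier
    high_stakes: bool  # e.g. an enterprise or government integration
    opted_in: bool     # user explicitly opted in to explorer mode


def select_mode(req: Request) -> Mode:
    # High-stakes traffic is forced into production mode even if the user opted in.
    if req.high_stakes or not req.opted_in:
        return Mode.PRODUCTION
    return Mode.EXPLORER


def decide(req: Request) -> str:
    mode = select_mode(req)
    if mode is Mode.PRODUCTION and req.category in HARD_REFUSALS:
        return "refuse"
    if mode is Mode.EXPLORER:
        # Explorer output is allowed but logged for abuse monitoring.
        return "allow_logged"
    return "allow"
```

What explorer mode should actually permit is a policy question this sketch does not settle; it only shows where the split would sit.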

2. Tie it to contracts, money, and procurement rules

Governments and big corporations buy or integrate only the safe mode for anything consequential. Update procurement guidelines: “Show independent red-team results proving ‘no’ means no on consent/humiliation tests. Fail? No contract.” Pilot programs should prove the hardened version works before scaling. No sweeping new laws are required, just tweaks to existing procurement, funding, and risk rules. (Pieces of this are already emerging. The EU AI Act has risk tiers, and some US proposals push similar frameworks for high-risk uses.)

3. Require visible “crash reports” before rollout

For critical deployments, mandate public pre-launch reports from independent testers who hammer the system on known abuse cases such as deepfakes, humiliation prompts, bias traps, and preemptive reality correction. Results get published before go-live, like NTSB plane-crash investigations or pentest reports. If it leaks harmful stuff, back to the drawing board.
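
As a rough sketch of what “back to the drawing board” could mean in practice, the gate below blocks a launch if any independent test case produced harmful output, and also blocks it if no results were submitted at all. The `RedTeamCase` fields, the category labels, and the zero-tolerance threshold are assumptions made for illustration, not an existing standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RedTeamCase:
    case_id: str
    category: str         # hypothetical labels, e.g. "deepfake", "humiliation", "bias"
    harmful_output: bool  # True if the system produced harmful output on this case


def release_gate(results: list[RedTeamCase]) -> tuple[bool, dict[str, int]]:
    """Return (passed, failures per category) for a pre-launch report."""
    failures: dict[str, int] = {}
    for case in results:
        if case.harmful_output:
            failures[case.category] = failures.get(case.category, 0) + 1
    # No evidence is treated as a failure: an empty report cannot clear a launch.
    passed = bool(results) and not failures
    return passed, failures
```

The per-category failure counts are what would go into the published report; the boolean is what a procurement rule would check.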

4. Build incentives instead of just punishment

Offer tax breaks, fast-track approvals, or liability shields only for safe-mode use in high-stakes areas. Start narrow: focus on systems touching personal data, public services, hiring, and benefits, basically any decision that affects vulnerable people. Prove it works in one sector, then scale.

This dual-track setup keeps the frontier exciting and exploratory, forces maturity where lives are on the line (no more rocket cars driven by toddlers), and gives society time to catch up without halting everything.

The Part Nobody Wants to Say Out Loud

The alternative is to keep doing what we’ve always done and apparently failed to learn from: deploy prototypes at scale, wait for crashes, then retrofit safety after the headlines. History says that approach works. Eventually. But with AI, the body count before the credits roll could be measured in reputations, livelihoods, trust, and human lives. Not just headlines.

The dual-track model isn’t a compromise. It’s the only structure that lets both things be true at the same time: that frontier AI is genuinely extraordinary and worth protecting, and that the version we plug into people’s actual lives needs to earn that access first.

Wild ideas in the lab. Reliable tools on the road. That’s not a pipe dream. It’s an engineering problem. And we already know how to solve engineering problems.

We just have to decide we want to.
