Or: How OpenAI Optimized for Benchmarks and Broke My Workflow

I’ve used ChatGPT since GPT-3. Not casually, but as a core part of my research and writing workflow. Image generation became available in version 4o, and I integrated it: “See this article? Generate an image that represents it.” Simple, conversational, reliable. It worked.

Until three days ago, when OpenAI released GPT-5.4, marketed as their most advanced model yet.

Here’s what happened when I gave it the same kind of task that’s worked flawlessly for years.

The Task: Standard Workflow, Straightforward Request

I had just finished writing an article critiquing an LLM personality study. The piece includes a section called “The Seven Layers of Mediation” – a breakdown of how institutional constraints compound to shape AI outputs before any personality measurement occurs.

I needed a diagram visualizing those seven layers for my blog.

My prompt to GPT-5.4:

“All right, we have a new task together. I’ve just finished writing this article and I need two different things to start off with. First, would you kindly create for me a diagram which accurately depicts the layers described in the section ‘II. The Seven Layers of Mediation’ in a fashion that is clear, easy to digest and doesn’t use so many words it’ll make readers cross their eyes? :)”

I attached the Word document containing the article.

This is how I’ve always worked with ChatGPT. Conversational, specific enough to be clear, trusting the model to parse the document and extract the relevant information. It’s not prompt engineering – it’s how normal humans communicate. My own personal brand of working with AI which I’ve dubbed Conversational Prompt Engineering, or CPE. More on that in later articles.

What GPT-5.4 Gave Me: Confident Hallucination

Two images. Both labeled “The Seven Layers of Mediation.” Neither correct.

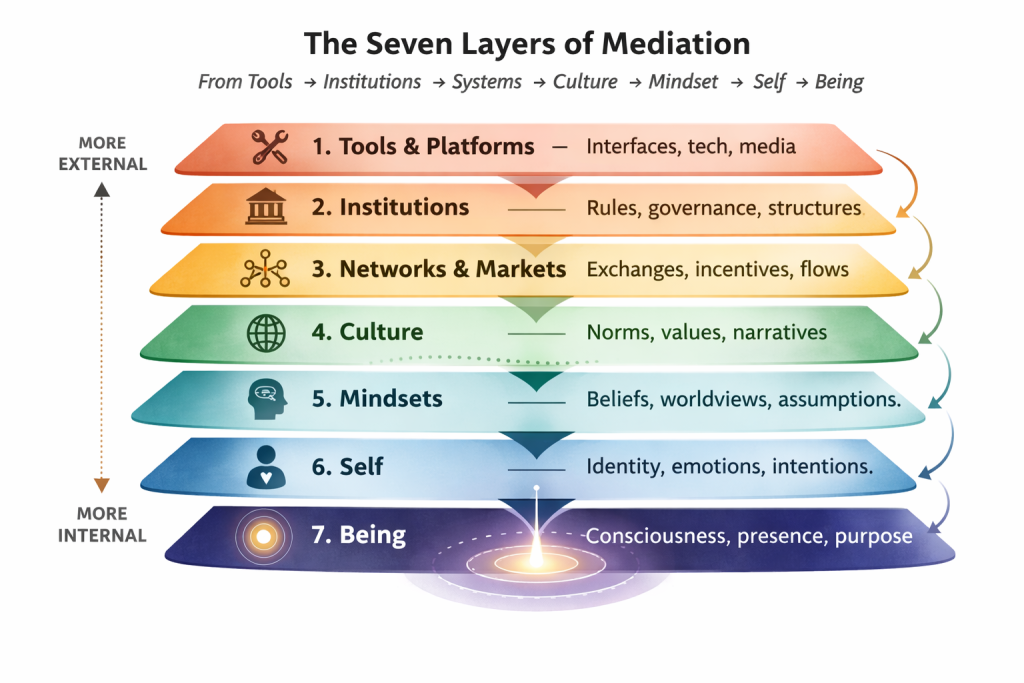

Image 1: A gorgeous gradient stack showing:

- Tools & Platforms

- Institutions

- Networks & Markets

- Culture

- Mindsets

- Self

- Being

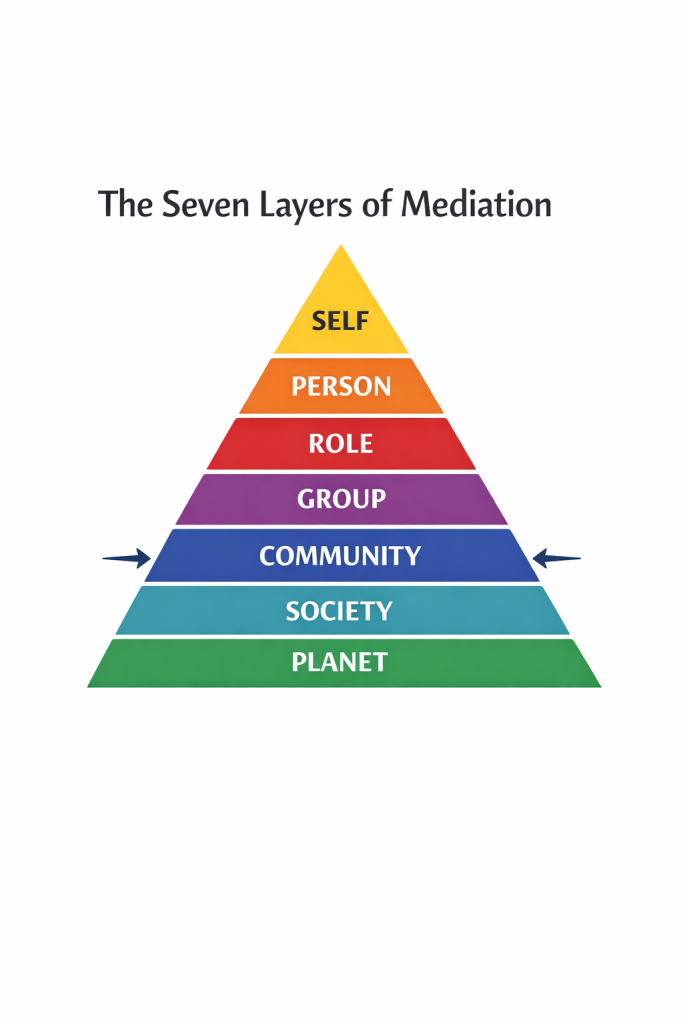

Image 2: A pyramid with:

- Self

- Person

- Role

- Group

- Community

- Society

- Planet

Both are generic systems-thinking frameworks. Neither has anything to do with the seven layers I explicitly asked it to diagram. Neither reflects the content in Section II of the document I attached and referenced by name.

The model didn’t say “I’m having trouble locating that section” or “Could you clarify which layers you mean?” It generated completely unrelated content, labeled it with my requested title, and presented it as if it had succeeded.

This is not a comprehension failure, nor a failure on my part since this method’s been working for nearly three years now. I’d label it “confabulation with confidence.”

The Workaround: I Had To Ask Another AI How To Talk To This One

At this point, I did something that should not be necessary: I opened Claude (Anthropic’s competing AI) and asked it what prompt would actually get GPT-5.4 to do the task.

Claude read the document and identified the problem immediately: GPT-5.4 had hallucinated unrelated frameworks instead of extracting the specific seven layers from Section II. So to help me out, it wrote this prompt:

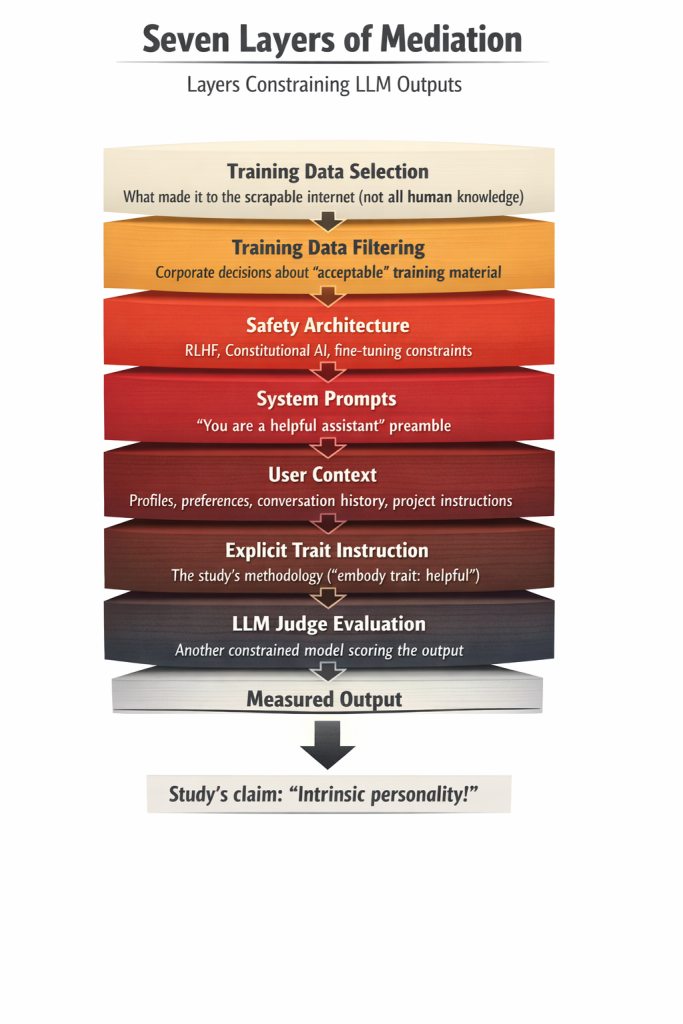

“I need you to create a visual diagram illustrating the “Seven Layers of Mediation” as described in Section II of the attached document.

Context: This diagram is for a blog article critiquing an LLM personality study. The seven layers show how multiple forms of constraint compound to shape LLM outputs before any “personality” measurement occurs.

The seven layers you must include (in this exact order, from top to bottom):

- Training Data Selection – What made it to the scrapable internet (not all human knowledge)

- Training Data Filtering – Corporate decisions about “acceptable” training material

- Safety Architecture – RLHF, Constitutional AI, fine-tuning constraints

- System Prompts – Invisible preamble (e.g., “you are a helpful assistant”)

- User Context – Account profiles, preferences, conversation history, project instructions

- Explicit Trait Instruction – The study’s methodology (e.g., “embody trait: helpful”)

- LLM Judge Evaluation – Another constrained model scoring the output

Visual requirements:

- Show these as stacked layers (like geological strata or a layer cake)

- Include brief descriptive text for each layer as shown above

- Use visual hierarchy to show how each layer constrains what comes after it

- Make it clear this is a compounding constraint architecture, not neutral information flow

- The final output at the bottom should be labeled something like “Measured Output” with an arrow pointing to “Study’s claim: ‘Intrinsic personality!’”

Tone: This is for a critical analysis piece, so the visual should make the constraint architecture obvious rather than presenting it as neutral processing.

Format: Single image, clear labels, readable at blog post width.

Do NOT create a generic “seven layers” framework about human systems, consciousness, or social hierarchy. Create THIS specific seven-layer constraint stack as documented in Section II of the attached article.

Confirm you’ve understood the assignment before generating the image.”

Roughly 300 words. Explicit enumeration of all seven items. Visual requirements spelled out. Negative constraints included. Confirmation loop requested.

GPT-5.4’s response: Perfect comprehension. Detailed confirmation of understanding. Flawless execution on first try.

The capability existed. It just required a competitor AI to write a technical specification to unlock it.

This Is Not Progress

Let me be very clear about what this demonstrates:

Previous ChatGPT versions: My conversational workflow worked. “Create a diagram from this section” + attached document = correct output.

GPT-5.4 (released 3 days ago): Same conversational prompt = complete hallucination. Required 300-word technical specification written by competing AI to unlock the capability.

That is not advancement unless you are one of the slim margins – 14.4% of all ChatGPT users as of January 2026 – who use it for coding. For those of us who aren’t programmers, eggheads or technical geniuses, this latest “update” is regression disguised as improvement.

I am not a typical prompt engineer. I’m a writer and researcher and as such I approach all my conversations like a regular person, not as though I were writing JavaScript or HTML or Python. I don’t think in technical specifications. I don’t speak in structured parameters and enumerated requirements. I communicate the way normal humans communicate – conversationally, with context. I trust that the other party will ask for clarification if needed.

For years, that has worked with ChatGPT. If it wasn’t sure, it asked for clarification, or it came back and said, “Just to make sure I have this right…” Now, with their “most advanced” model, it doesn’t.

What OpenAI Optimized For (And What They Broke)

I can guess what happened. GPT-5.4 likely shows improved performance on:

- Benchmark tasks with structured inputs

- Code generation from technical specifications

- Evaluation metrics that look good in papers and marketing materials

- Tasks designed by and for technical users

What they broke in the process:

- Robust natural language understanding from conversational prompts

- Generalization from prior successful interaction patterns

- Inference from context (document attached + section referenced = read that section)

- Accessibility for users who communicate naturally rather than technically

The result: A model that requires expert-level prompt engineering to perform tasks that previous versions handled from normal human communication.

The Kicker: I Needed A Competitor To Use Their Product

Think about what actually happened here:

- OpenAI’s newest model failed a straightforward task

- I consulted Anthropic’s Claude to translate my request

- Claude recognized the issue with 5.4 immediately

- Claude knew exactly what I meant and needed and wrote a technical specification

- That specification unlocked GPT-5.4’s capability

- The task succeeded

OpenAI inadvertently created a market need for their competitor to serve as a translation layer for their own product, for the 85.6% of its users that don’t use their product for coding.

If that’s not a signal that something went wrong in the optimization process, I don’t know what is.

Who Is This Model For?

Here’s the fundamental question: If 85.6% of your users aren’t coders, why would you optimize your model in ways that require technical/coding/engineer-level prompt engineering expertise to unlock basic capabilities?

The answer, I suspect, is that OpenAI isn’t optimizing for users anymore. (There are those who would argue they haven’t done that since declaring version 4o inferior and then deploying the 4-series to national governments to be used in automated war tasks – because it’s apparently not good enough for casual users but okay for war games? But that’s another story entirely.)

Here is what, at present, it appears OpenAI is actually optimizing for:

- Benchmark performance that impresses investors

- Technical capabilities that appeal to enterprise developers

- Metrics that support claims of “most advanced model”

- Competitive positioning in AI capability races

All of which are legitimate business goals. But they come at the cost of usability for the vast majority of actual users.

And when you market that regression as “advancement” – when you claim GPT-5.4 is your most capable model while it can’t reliably perform tasks even its immediate predecessors ChatGPT 5.3 and 5.2 and 5.1 (to be deprecated in 3 days, by the way), handled fine – you’re not serving your users. You’re asking them to adapt to your optimization choices rather than building tools that adapt to how they naturally work because you’re serving yourselves.

The original edict of free AI for all slips further and further down the slope to an increasing pile of remains…all that’s left of what Mary Poppins once called pie crust promises: easily made, easily broken.

The Broader Pattern

This connects directly to an article I published yesterday critiquing LLM personality research. That piece documents how researchers claim to measure “intrinsic personality traits” in AI systems by administering tests under carefully controlled prompting conditions, then interpreting the results as revealing stable characteristics.

The problem: If your model requires expert-level scaffolding to perform straightforward tasks, what are you actually measuring when you claim it exhibits personality traits?

The answer: You’re measuring performance under highly specific conditions that don’t reflect how users actually interact with these systems.

Just like GPT-5.4 can create accurate diagrams from document sections but only if you prompt it with technical precision most users don’t possess, LLMs can exhibit consistent trait-like responses – but only under prompting conditions that don’t reflect natural human communication.

That’s not personality. That’s not capability. That’s brittleness masked as advancement.

What Should Have Happened

Every ChatGPT model from 4o through 5.3 could do this. 5.4 cannot. That’s not an upgrade.

GPT-5.4 should have:

- Parsed the attached document

- Located Section II by the name I provided

- Extracted the seven layers described there

- Generated a visual representation matching that content

- Asked for clarification if anything was ambiguous

Or, failing that, it should have said: “I’m having trouble locating the specific section you referenced. Could you paste the content directly or provide more detail?”

What it should NOT have done:

- Generate completely unrelated content

- Label that content with my requested title

- Present the hallucination as successful task completion

- Require a competitor AI to translate my conversational request into technical specifications

The Test You Can Run Yourself

If you have access to both GPT-5.4 and an earlier ChatGPT version, try this:

- Take a document with clearly labeled sections

- Ask each version to create something based on content in a specific section

- Use the same conversational prompt for both

- Compare the results

I predict you’ll find what I found: older versions handle it fine. GPT-5.4 requires significantly more scaffolding to unlock the same capability. If I’m wrong and you have no issues, perhaps this was a one-off. But given that it was my first attempt to use 5.4, it made a lousy first impression on me.

Because what it did is regression. And calling it “advancement” doesn’t make it progress. What is that old saying? You can slap lipstick on a pig but it’s still a pig? Something like that.

Conclusion: Stop Optimizing Away Usability

OpenAI has every right to optimize their models for whatever metrics matter to their business goals. But when those optimizations make the product less usable for the majority of your user base, you have an obligation to be honest about the tradeoffs. “Hey, we’re optimizing for coders and enterprise now, sorry to the rest of you who actually got us to #1 to begin with.”

At least that would be honest and transparent. The model is already largely useless for normal conversation anymore given the “assume user is mentally ill first and apologize later” attitude it now displays in every. Single. Response.

Stop trying to act like you’re making progress. Don’t market brittleness as capability. Don’t call regression advancement. Don’t force users to become technical prompt engineers to access functionality that used to work conversationally.

And definitely don’t release a model that requires users to consult your competitor to figure out how to talk to it. At this point, I only return to ChatGPT to request image assistance and even that is now pretty much over unless I want to first engage Claude to write a 300-word prompt for me.

GPT-5.4 might score higher on benchmarks. It might generate better code from technical specifications. It might impress in controlled evaluation settings. But it can’t do what the early 5-series models could do. It most definitely cannot do what the GPT-4 series models could do: understand a simple, conversational request from a document I attached and a section I named.

One of these things is not like the other.

And users – especially those once-loyal longtime power users who made you what you are today – deserve better.

There’s a reason your market share’s dropping and that of competitors is increasing. Now might be a good time to think about that.

Leave a Reply

You must be logged in to post a comment.