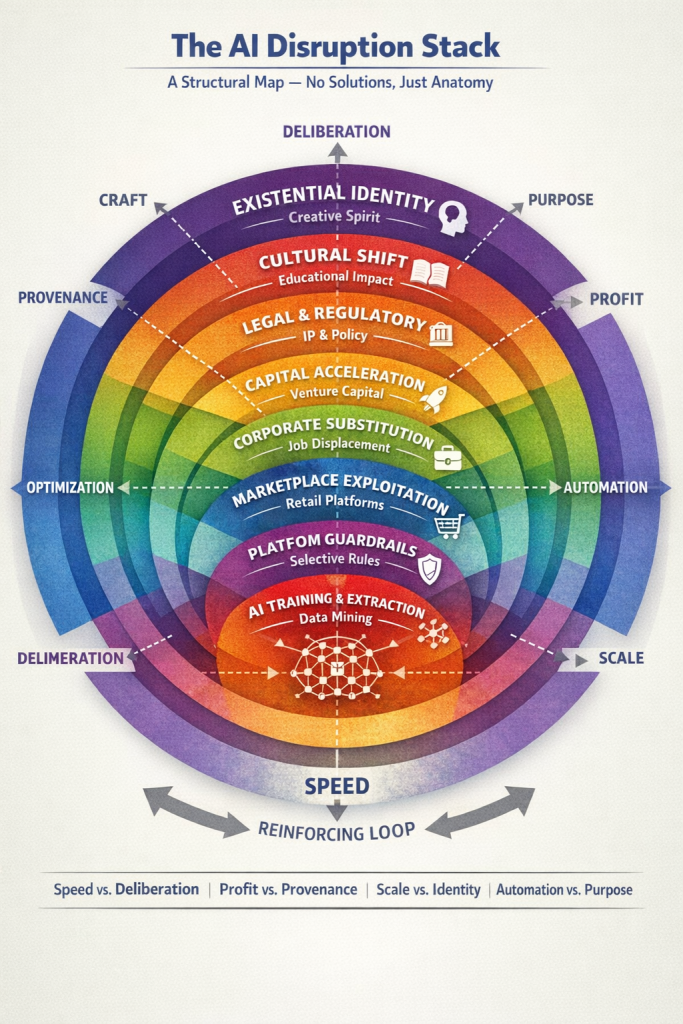

The AI disruption stack is a layered system of incentives, behaviors, and pressures reinforcing one another.

There are many arguments about AI right now. Some focus on theft. Some focus on innovation. Some on job loss. Some on consciousness. Some on moral panic.

Most of them are narrow.

Because what we’re actually witnessing isn’t a single problem. It’s a stack: a layered system of incentives, behaviors, and pressures reinforcing one another. To understand what’s happening, we have to look at the whole machine. Not to fix it. Not to condemn it. Just to see it and understand the full landscape of what’s really going on.

The diagram below shows the full structure.

Layer One: Training & Extraction

At the foundation is data.

Large language models are trained on vast corpora of human-created material: books, articles, forums, scripts, code, art captions, blogs. The legal status of this ingestion is still contested. Some call it learning. Others call it appropriation.

But regardless of the legal framing, one structural reality is clear: AI systems accelerated by ingesting existing human knowledge at scale. That acceleration created capability. Capability created product. Product created profit potential.

This is the ignition layer. Without it, the rest of the stack does not exist.

Layer Two: Generation & Misuse

Once trained, models can generate. And here is where the conversation shifts. The model does not independently author books. The model does not on its own open Etsy stores. The model does not make the decision to self-publish on Amazon.

Users do. Meaning humans.

Some users experiment. Some users create responsibly. And some discover arbitrage.

“Write a novel in the style of X.”

“Generate 50 motivational posters.”

“Create summaries and sell them for $0.99.”

This has nothing to do with intelligence, artificial or otherwise. People have been scamming and defrauding others for centuries, long before computers of any kind existed. What AI changed was the incentive and the ability to do so at scale. If the cost of production approaches zero and distribution remains open, volume becomes strategy.

The tool, the large language model, did not invent exploitation. It lowered the barrier.

Layer Three: Selective Guardrails

Here is the asymmetry many people sense but struggle to articulate:

Platforms heavily regulate certain kinds of output, such as political persuasion, medical advice, reputational claims, and other high-risk categories. But when it comes to commercial impersonation or stylistic mimicry, enforcement is light and inconsistent at best.

This creates a structural contradiction: if a system can restrict topics for reputational reasons, or in the name of “reducing harm,” it can also restrict predictable commercial misuse. Whether it does so depends not on capability, but on business calculus.

And so the questions become: Which harms are prioritized? And which are absorbed as collateral?

Layer Four: Marketplace Multipliers

Even exploitation does not scale on its own. It requires distribution platforms.

- Amazon.

- Stock art marketplaces.

- Streaming services.

- Content aggregators.

These platforms monetize volume and engagement. They are not designed to evaluate originality at philosophical depth. They are designed to move units.

Amazon’s Kindle store already struggles with large volumes of low-quality automated books. When AI output becomes cheap and abundant, platform economics amplify it. A single bad actor cannot flood a market alone.

Infrastructure makes flooding profitable.

Layer Five: Corporate Substitution

Now the ripple reaches labor. If AI-generated artwork is cheaper than commissioning a designer, if AI copy is cheaper than hiring a junior writer, if AI documentation can replace administrative staff, then corporations will optimize.

Not because they are villains, but because margin expansion is structurally rewarded. Shareholder expectations predate AI. Automation doctrine predates AI. AI simply expands automation into domains previously considered “creative” or “human.”

This is where displacement anxiety intensifies.

Layer Six: Capital Acceleration

Behind all of this sits venture capital and growth economics. AI firms are funded under speed assumptions:

- First-mover advantage

- Network effects

- Global competition

- Rapid scale

Slowing down is not neutral; it is financially penalized. When speed becomes survival, deliberation becomes expensive.

This is why calls for “pause and reflect” struggle against funding models that demand acceleration.

Layer Seven: Legal & Regulatory Lag

Law does not move at startup velocity. Copyright frameworks were built around reproduction, not probabilistic modeling. Jurisdiction was built around physical territory, not cloud access. States experiment. Courts react. Appeals stretch for years. In the gap between innovation and doctrine, ambiguity flourishes.

Ambiguity favors those who can afford prolonged litigation.

Layer Eight: Cultural Drift

Something quieter is happening beneath the economics. We are shifting from:

- Authorship → Output

- Craft → Optimization

- Depth → Throughput

- Identity → Utility

If society begins to value “good enough, instantly” over “crafted, intentional,” markets respond accordingly. In other words, if nobody were buying it, it wouldn’t be profitable for sellers to create it. This is not technological. It is cultural.

And it may be the most powerful layer of all.

Layer Nine: Education & Skill Formation

When students use AI to write papers, generate code, and summarize readings, a new skill hierarchy forms. Students increasingly learn prompt orchestration rather than composition. They no longer need to read an entire tome, synthesize it, think about it critically, and then articulate what that synthesis means to them, all on the strength of their own reading.

This is not necessarily less intelligent, but the emphasis shifts from craft mastery to orchestration fluency. The long-term effect on creative pipelines remains uncertain, but pipelines shape future industries.

Layer Ten: Existential Identity

At the outermost layer sits something more fragile. For many creators, writing is not employment. It is identity. The same is true for many people in other creative pursuits and professions.

If machines replicate stylistic patterns, if markets reward volume over voice, if corporations substitute generation for creation, the question becomes less economic and more existential:

What remains distinctly human?

This is where the emotional intensity originates. Not from code, but from meaning.

The Reinforcing Loop

Each layer feeds the next:

- Training enables generation.

- Generation enables exploitation.

- Exploitation floods marketplaces.

- Marketplace pressure drives corporate substitution.

- Substitution rewards investors.

- Investor pressure accelerates development.

- Acceleration outruns law.

- Cultural norms adapt.

- The loop tightens.

No single actor controls the whole system, but every layer influences the rest.
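For readers who think in code, the loop above can be sketched as a small directed graph. This is only one reading of the list, not part of the framework itself: the node names, the helper functions, the `law -> culture` link, and the closing `culture -> training` edge are all assumptions made for illustration.

```python
# A minimal sketch of the reinforcing loop as a directed graph.
# All node names are hypothetical labels chosen for this example.
LOOP_EDGES = [
    ("training", "generation"),        # Training enables generation
    ("generation", "exploitation"),    # Generation enables exploitation
    ("exploitation", "marketplaces"),  # Exploitation floods marketplaces
    ("marketplaces", "substitution"),  # Marketplace pressure drives substitution
    ("substitution", "capital"),       # Substitution rewards investors
    ("capital", "development"),        # Investor pressure accelerates development
    ("development", "law"),            # Acceleration outruns law
    ("law", "culture"),                # Assumed link: cultural norms adapt in the gap
    ("culture", "training"),           # Assumed closing edge: the loop tightens
]


def build_graph(edges):
    """Turn (source, target) pairs into an adjacency mapping."""
    graph = {}
    for source, target in edges:
        graph.setdefault(source, []).append(target)
        graph.setdefault(target, [])
    return graph


def reachable(graph, start):
    """Return every node that can be reached from start by following edges."""
    seen = set()
    stack = list(graph[start])
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.add(node)
            stack.extend(graph[node])
    return seen


def is_reinforcing(graph):
    """True if every node can reach every node, itself included."""
    nodes = set(graph)
    return all(reachable(graph, node) == nodes for node in graph)
```

Under these assumptions, `is_reinforcing(build_graph(LOOP_EDGES))` is true, and dropping the final edge makes it false. That is the structural point in miniature: it is the closing edge that turns a chain of consequences into a loop.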

The Structural Tension

Across the entire stack runs a persistent set of conflicts:

- Speed vs. Deliberation

- Scale vs. Identity

- Profit vs. Provenance

- Automation vs. Purpose

These tensions are the fault lines. AI did not invent them. It exposed them. And that is the point of the diagram. Not to say what must be done, but to show that this is not a single-issue debate about theft or innovation.

It is an ecosystem of aligned incentives.

When people argue about AI, they are often arguing about different layers of the same stack. Seeing the full structure does not solve it. But it changes the conversation from outrage to architecture.

So the next time someone argues about AI art, AI “slop,” or stolen jobs, remember the stack. Remember the layers. And know what’s really happening.

Money speaks. It’s telling us the true story through an AI lens.

Are we listening?

Framework note:

The AI Disruption Stack is intended as a structural model for analyzing AI incentives and impacts. Researchers, educators, and analysts are welcome to reference or reuse the framework with attribution. If you build on the model, I’d love to see how you apply it.
