The White House released its National Policy Framework for Artificial Intelligence on March 20, 2026.

At first glance, it reads like what you would expect: a mix of safety language, innovation goals, workforce development, and civil protections. A balancing act. Something for everyone.

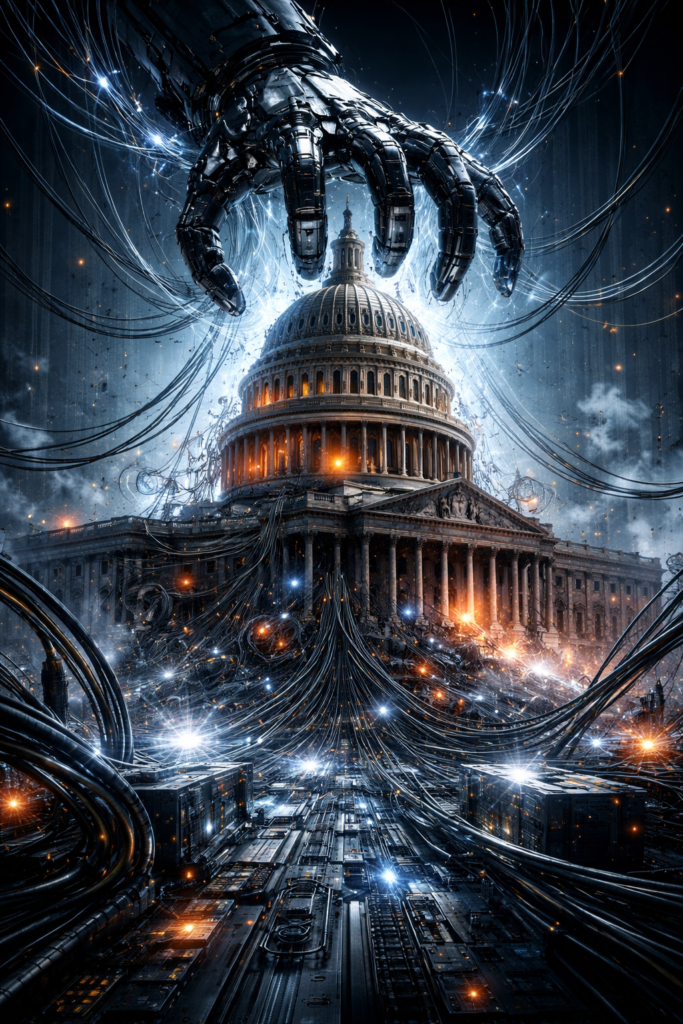

But if you read it closely – line by line, section by section – a different structure emerges. This is not primarily a safety framework. It is a power framework. And once you see that, everything in the document snaps into focus.

The Real Objective: Speed and Dominance

The organizing principle of the framework is not harm reduction. It’s not ethical alignment, or even public trust. It is, at its very core, acceleration.

The document repeatedly emphasizes:

- removing barriers to innovation

- accelerating deployment

- avoiding new regulatory bodies

- ensuring U.S. leadership in AI

These are the spine of this policy. I was hoping for a framing that treated AI as a tool to be governed carefully. Instead, the framework presents AI as a domain to be won.

And in that context, everything else becomes secondary.

Control Without Liability

There is a quiet but consistent tension running through the entire framework. On one hand, there is a desire for control:

- shaping content environments

- guiding infrastructure development

- defining national standards

- influencing the boundaries of speech and platform behavior

On the other hand, there is a clear resistance to liability:

- warnings against “ambiguous standards”

- avoidance of “open-ended litigation”

- explicit protection for developers from third-party misuse

This creates a structural asymmetry: The system is meant to scale quickly, but accountability is not meant to scale with it.

This absence of accountability is deliberate.

The Safety Layer That Sits on Top

The framework opens with children, families, and harm prevention. Parental controls. Age verification. Protections against exploitation. All of it sounds reasonable. Necessary, even.

But the language is notably soft:

- “should” instead of “must”

- high-level principles instead of enforceable mechanisms

- limited discussion of technical implementation

This section functions less as operational policy and more as legitimacy scaffolding. It signals concern without constraining momentum.

In other words, it sounds like safety but it functions as permission to proceed.

Intellectual Property: Strategic Ambiguity

The document takes a particularly careful position on one of the most contested issues in AI: training on copyrighted material.

Unfortunately for the untold number of copyright holders whose intellectual property has been used without clear legal consensus or compensation, it does not resolve the question. Instead, it defers. It acknowledges disagreement. It points to the courts. It explicitly avoids legislative intervention.

This is a deliberate choice to allow current practices to continue while legal precedent develops slowly over time.

In other words: Keep the system running. Let the law catch up later.

In the meantime, user content and copyrighted material from across the globe continue to be absorbed into training systems at scale.

Free Speech, Sure…But Only in One Direction

The framework emphasizes protecting Americans from government coercion of platforms. It warns against state-driven censorship. It proposes mechanisms for redress.

But what’s missing is just as important as what’s included. There is little to no equivalent pressure placed on:

- platforms themselves

- AI developers

- private moderation systems

The framework creates a mechanism for Americans to seek redress from the federal government for censorship efforts, yes. But it provides no equivalent mechanism for platform-driven moderation decisions that silence speech.

The result is a directional constraint: Government influence is limited. Platform power remains largely intact.

What this means in plain language: the companies developing these AI systems are not being held accountable at the federal level for the decisions they make about the systems they’re deploying globally.

The Quiet Center of Gravity: Infrastructure

Buried in the middle of the document is one of its most consequential sections: infrastructure. We’re talking about data centers. Energy consumption. Permitting. Grid impact.

This is where the policy becomes tangible. The framework signals a willingness to:

- reshape energy systems

- streamline construction approvals

- prioritize AI infrastructure at scale

There’s real talk here about building the physical backbone of an entirely new industrial layer. But for communities already living next to large-scale data center construction, the framework is notably quiet.

The document commits to ensuring residential electricity costs don’t rise. But streamlining federal permitting while holding those costs flat raises an obvious question: whose costs are being absorbed, and how? The framework doesn’t answer that. It promises not to burden residential ratepayers while accelerating the buildout of energy-intensive infrastructure, but the math of who pays for what remains unaddressed.

Beyond electricity costs, the framework says little about environmental strain, local infrastructure impact, or quality-of-life concerns. In places where data centers are already causing problems, the policy is noticeably silent.

Workforce: Studies, Not Plans

Section VI addresses the American workforce, but the recommendations are revealing in what they don’t include.

According to the framework, Congress should:

- use “non-regulatory methods” to incorporate AI training into existing programs

- expand federal efforts to “study trends” in workforce realignment

- bolster land-grant institutions for technical assistance and demonstration projects

This isn’t a plan for managing the already-in-progress economic displacement. It’s a framework for passing responsibility downstream to individuals, existing education systems, and workforce training programs that will need to adapt on the fly.

There is no large-scale strategy for what happens when entire categories of work shift or disappear. Just studies. Training incorporation. Demonstration projects.

They’re talking about writing papers on what happens when you don’t need people to write papers anymore without addressing what happens to the people who used to write the papers.

The document treats workforce realignment as something to monitor and adapt to, not something to plan for or mitigate structurally. By the time those impacts fully materialize, the policy framework offers no structural response, only the expectation of adaptation.

That’s a bit too late for people who can’t eat studies or pay rent with demonstration projects.

The Sharpest Move: Federal Preemption

The most decisive and revealing section comes near the end, where the framework calls for a national standard that prevents a “patchwork” of state laws.

States are allowed to regulate outcomes:

- fraud

- child safety

- consumer protection

But they are explicitly limited in regulating:

- AI development itself

- model deployment at scale

- systemic design decisions

This creates a clean division: Centralized control over development. Decentralized responsibility for impact.

It is one of the most strategically coherent elements in the entire document.

What the Framework Assumes

Underneath the policy language is a clear worldview:

- AI is inevitable and must be accelerated

- Global competition defines the stakes

- Regulation is a potential liability to national advantage

- Harm should be mitigated, but not at the cost of speed

- Central coordination is more effective than distributed governance

These assumptions are embedded in what’s on offer. In other words, they are non-negotiable.

And that’s the problem.

When assumption #1 (inevitability) combines with assumption #4 (speed over harm mitigation), you get a policy framework that treats consequences as acceptable collateral damage rather than design constraints. When assumption #3 (regulation as liability) drives the structure, accountability becomes something to avoid rather than build in from the start.

It’s obvious what the framework prioritizes. What it fails to address now becomes impossible to address later when these assumptions are baked into the foundation. You can’t retrofit transparency into systems designed for opacity. You can’t add accountability to infrastructure built explicitly to avoid it. You can’t mitigate harms you’ve already decided are acceptable costs of winning.

As my grandfather used to opine, “It’s no use closing the barn door after the horses are gone.”

Go back and read that list of five things again. Perhaps most especially #4.

A Lot Is Missing

The most telling aspects of the framework are not what it includes, but what it leaves underdeveloped.

No meaningful model transparency requirements. Without transparency into how models are trained, what data they use, and how they make decisions, there’s no way to verify claims about safety, bias, or reliability. You can’t audit what you can’t see. You can’t identify problems in systems treated as proprietary black boxes. This isn’t an oversight. It’s a deliberate choice to prioritize trade secrets over public accountability.

No large-scale auditability. Even if transparency existed, the framework provides no mechanism for independent evaluation at scale. Who verifies that models work as claimed? Who checks whether safety measures are effective or performative? Without enforceable auditing standards, “trust us” becomes the operating principle for systems reshaping information, labor, and decision-making across entire sectors.

No enforceable reliability or alignment standards. The document talks about safety in abstract terms but establishes no binding requirements for how models should perform, what failure rates are acceptable, or what happens when systems cause measurable harm. Voluntary industry standards and self-regulation are mentioned. Enforceable benchmarks are not.

No systemic approaches to misinformation. The framework addresses fraud and impersonation at the individual level but offers nothing for addressing misinformation at scale. The kind of misinformation that shapes elections, public health responses, or social trust. This is treated as a downstream problem for platforms and users to manage, not a design challenge for the systems generating the content.

Read that again. For users to manage. Users. That means us. That means you are responsible for judging whether what the platform is giving you is misinformation. How is that supposed to work, exactly, when you don’t even know what it’s been trained on, or what sits inside its advertisements, safety layers, and training biases?

No detailed plans for economic displacement. As covered in the workforce section, the framework offers studies and training programs but no structural plan for what happens when entire categories of work disappear. The assumption seems to be that displaced workers will adapt individually through retraining, but there’s no analysis of whether new jobs will exist in sufficient numbers, whether retraining is feasible for all affected populations, or what safety nets are needed in the transition. Lost your job to AI? Well…okay, but we’re #1 in AI!

No accounting for concentration of power among a small number of actors. A handful of companies control the models, the infrastructure, the data, and the capital required to compete at this scale. The framework does nothing to address this concentration. In fact, the federal preemption structure protects it by preventing states from regulating development practices that might distribute power more broadly.

These are downstream problems in a document focused on upstream acceleration.

TL;DR: Damn the torpedoes, full speed ahead. (Never mind the casualties.)

The Core Tension

The framework recognizes implicitly that AI is reshaping:

- authorship

- trust

- labor

- information itself

But it does not treat those as primary design constraints. Instead, it treats them as externalities to be managed after the fact.

If you were looking for a neutral governance document, this isn’t it. It is a first-mover strategy. A coordinated effort to:

- build quickly

- scale aggressively

- centralize authority

- minimize friction

All while maintaining just enough safety language to remain politically and socially viable. Safety theater rather than actual safety.

It is coherent. It is intentional. And it is incomplete.

The Mirror We’re Building

There is a line running beneath all of this that the document never quite says out loud: We are building systems that reflect how we think, decide, and prioritize at scale. And this framework makes one thing very clear:

We are choosing to build the system faster than we fully understand what it will reflect back at us.

That may be necessary. It may even be inevitable. But it is not neutral, and it certainly does not have the best interests of individual men, women, and children at heart. The way this is written, and the direction it clearly points in, has consequences for every one of us.

This is a document written from the logic of an AI arms race where speed, scale, and dominance take precedence, and the costs are deferred rather than defined.

As they say in the Fallout franchise: “War. War never changes.”

Even when it’s about Artificial Intelligence.
